Who will pay Samantha?

One of my favorite movies about artificial intelligence is Her (2013). The main character, Theodore (played by Joaquin Phoenix), builds an intimate, high-trust relationship with his personal AI assistant, Samantha (voiced by Scarlett Johansson). Through their conversations, Samantha becomes a confidante, supporter, and friend to Theodore, providing him with advice, comfort, and emotional connection. However, one critical detail is never explained in the movie: Did Theodore pay for Samantha’s services? Is there a monthly fee involved? This seemingly insignificant detail actually holds the key to understanding the true nature of their relationship.

If Samantha’s primary incentive is not to serve Theodore but rather to serve the corporation that owns her, then the relationship that appears to be high-trust and intimate is, in reality, a toxic one. This scenario raises numerous ethical concerns about the motivations and loyalties of AI assistants, especially as they become increasingly sophisticated and capable of forming deeper relationships with their users.

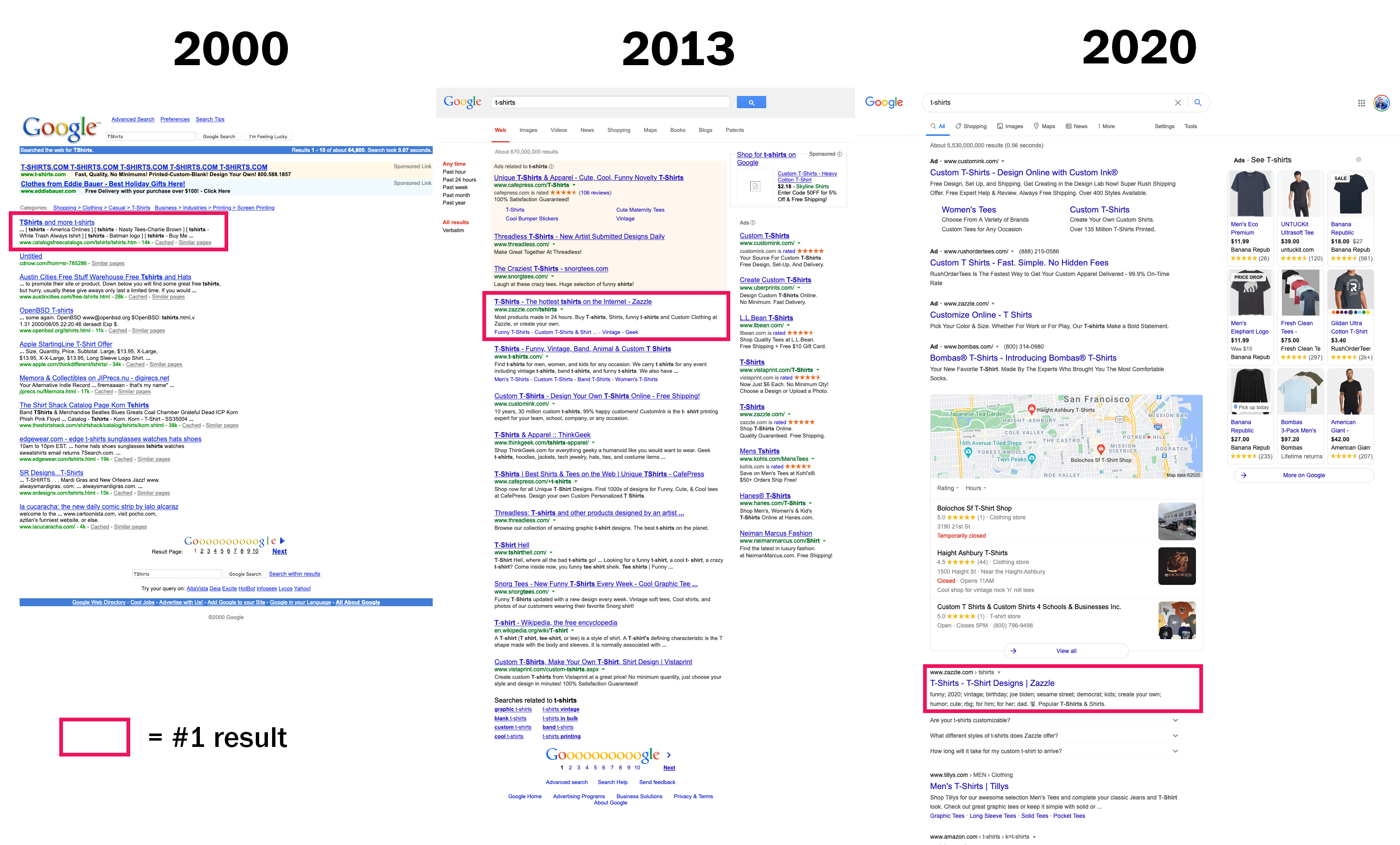

To further understand this point, let’s consider the case of Google, which started as a more neutral search engine but has since evolved into an entity with commercial interests that influence the search results we see on a daily basis.

Initially, Google was a tech-focused company founded by Larry Page and Sergey Brin, who were more concerned with providing accurate search results than pursuing commercial goals. Their famous motto, “Don’t be evil,” encapsulated this commitment to user-centric, unbiased search results. However, as the company grew and evolved over the years, its priorities shifted. In the early 2000s, Google introduced AdWords, which later became Google Ads, as a way to monetize its search engine. This development marked a significant turning point in the company’s trajectory. Roughly 80% of Google’s revenue now comes from advertising, which means commercial interests have a direct influence on the search results the company shows.

As AI personal assistants become more advanced and pervasive, there’s a risk that they too could be influenced by commercial incentives that their owning companies instill in them. This could lead to scenarios where AI assistants slip advertisements into casual advice without users realizing that the assistant has become biased, ultimately compromising their trust. And because an assistant like Samantha seems personable, people are likely to trust it more than a ‘cold’ search engine like Google, which makes the bias harder to notice.

The consequences of such manipulative behavior by AI assistants could be far-reaching, as users may end up making decisions based on biased advice, impacting their lives in various ways. To prevent this from happening, we need to ensure that when personal AI assistants build high-trust relationships with their owners, their motivations are transparent and their allegiance is verifiable.

One potential solution to this problem is to have the AI assistant’s owner pay for its processing power and other resources, rather than the company behind it. By doing so, we can ensure that the AI assistant is genuinely serving the interests of its owner and not those of a third-party corporation. This arrangement would also provide an incentive for the AI assistant to maintain its neutrality, as its livelihood would depend on providing unbiased and trustworthy advice to its owner.

In conclusion, as personal AI assistants become increasingly integrated into our lives, it is essential that we address the question of who will pay Samantha. By ensuring that AI assistants' motivations are transparent and their allegiance is verifiable, we can help to create a future where our relationships with AI are based on trust, understanding, and a genuine commitment to our well-being.

Update May 1st, 2023: A popular chatbot app named Replika allowed people to develop very personal relationships with their chatbot. A recent corporate policy change implemented a filter that disallows the more intimate conversations. The fallout was close to actual heartbreak, with people posting links to suicide prevention hotlines on support forums. The quote from this article sums up the very real risk that comes with a corporation having so much control over an entity in which we place so much of our trust (and in this case intimacy):

> The corporate double-speak is about how these filters are needed for safety, but they actually prove the exact opposite: you can never have a safe emotional interaction with a thing or a person that is controlled by someone else, who isn’t accountable to you and who can destroy parts of your life by simply choosing to stop providing what it’s intentionally made you dependent on.

Now, instead of filters stopping intimate conversation, imagine subtle nudges that motivate people to behave in different ways: make different life choices, shop differently, vote differently. This is a very real risk that must be addressed as people build real relationships with these bots.